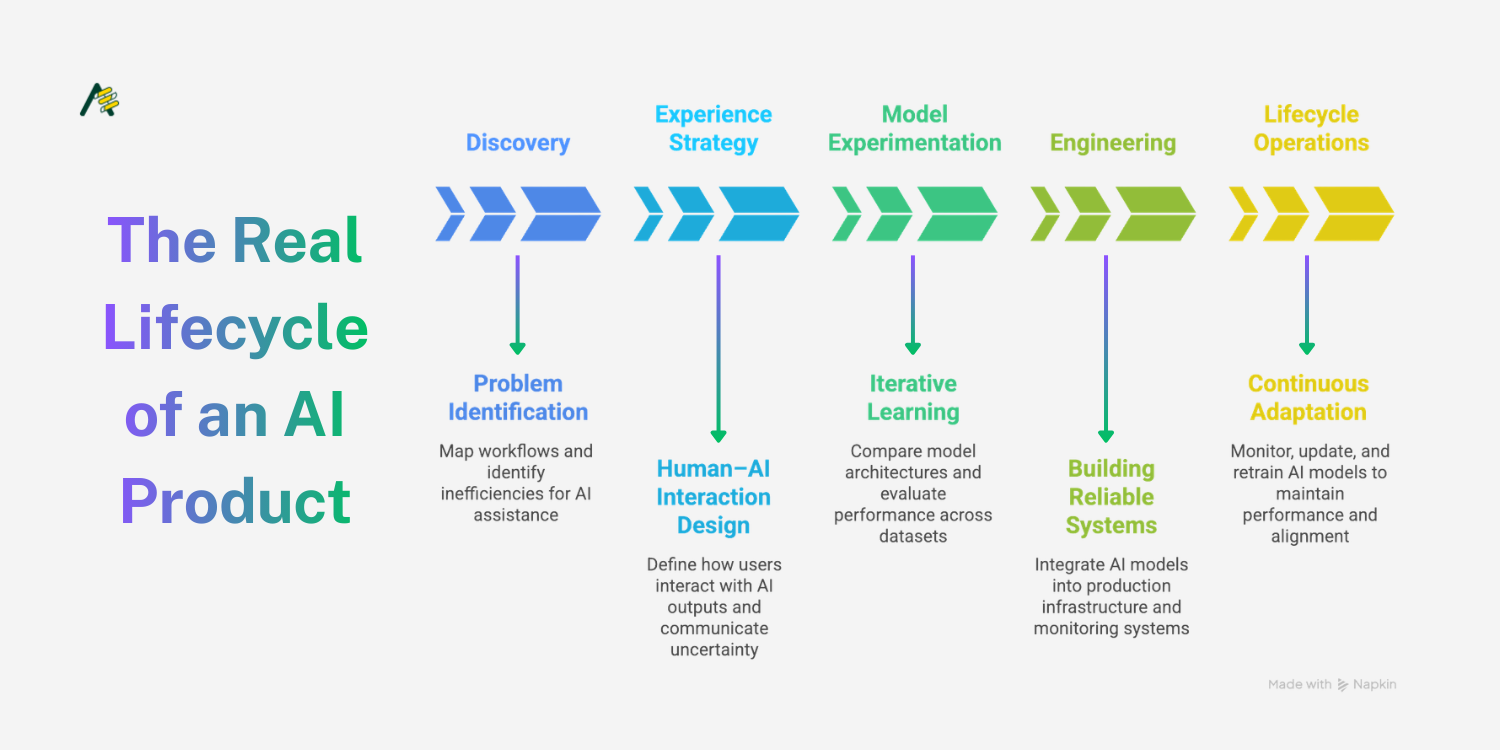

The Real Lifecycle of an AI Product

Geetanjali Shrivastava

Mar 4, 2026 · 4 min read

Artificial intelligence products often appear deceptively simple from the outside: a user interacts with a chatbot, recommendation engine, or automation feature, and the underlying system feels seamless. In reality, successful AI products emerge from a complex lifecycle that extends far beyond model training.

Many organisations still approach AI as a research initiative rather than a product discipline. The result is a recurring pattern: promising prototypes that struggle to translate into reliable production systems. Understanding the full lifecycle of AI development helps teams design systems that move from experimentation to sustainable deployment.

Discovery: Identifying the Right Problems

AI initiatives often begin with technology rather than user problems. Teams start with a model capability (classification, generation, prediction) and then search for places to apply it.

A more effective approach begins with discovery. This stage examines workflows, decision points, and inefficiencies where machine intelligence could meaningfully assist users. The focus is not on whether a model can technically perform a task, but on whether the task actually improves when assisted by AI.

Discovery work typically includes:

Mapping decision processes and user workflows

Identifying areas with high cognitive load or repetitive analysis

Assessing the availability and reliability of relevant data

Without this groundwork, teams risk building AI features that function technically but provide limited value in practice.

Experience Strategy: Designing Human–AI Interaction

AI systems rarely operate independently. Most are embedded within broader workflows where humans interpret, validate, or refine outputs.

Designing this interaction is a critical stage in the lifecycle. Poorly designed interfaces often undermine otherwise strong models. For example, a recommendation engine that cannot communicate confidence levels or reasoning may create friction rather than efficiency.

Experience strategy addresses questions such as:

When should AI act autonomously, and when should it only offer suggestions?

How should uncertainty or confidence be communicated?

What level of transparency do users require to trust the system?

Effective AI products treat model outputs as part of a user experience rather than an isolated technical function.
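One common way to make the autonomy and confidence questions concrete is to route each model output by its confidence score. The sketch below is illustrative only: it assumes the model exposes a calibrated confidence in [0, 1], and the names `route`, `explain`, `Action`, and the threshold values 0.9 and 0.6 are hypothetical choices, not a prescribed design.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_APPLY = "auto_apply"  # AI acts autonomously
    SUGGEST = "suggest"        # AI proposes, a human confirms
    DEFER = "defer"            # a human handles the case unaided


@dataclass
class Prediction:
    label: str
    confidence: float  # assumption: a calibrated probability in [0, 1]


def route(pred: Prediction, auto_threshold: float = 0.9,
          suggest_threshold: float = 0.6) -> Action:
    """Decide how much autonomy to grant a single model output.

    The default thresholds are hypothetical; in practice they would be tuned
    against the cost of errors in the specific workflow.
    """
    if not 0.0 <= pred.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if pred.confidence >= auto_threshold:
        return Action.AUTO_APPLY
    if pred.confidence >= suggest_threshold:
        return Action.SUGGEST
    return Action.DEFER


def explain(pred: Prediction, action: Action) -> str:
    """Render a user-facing message that surfaces confidence instead of hiding it."""
    pct = round(pred.confidence * 100)
    if action is Action.AUTO_APPLY:
        return f"Applied '{pred.label}' automatically ({pct}% confidence)."
    if action is Action.SUGGEST:
        return f"Suggested: '{pred.label}' ({pct}% confidence). Please review."
    return f"Not confident enough to suggest a result ({pct}% confidence)."
```

Keeping the routing rule separate from the message text means the autonomy policy can be tightened (for example, raising `auto_threshold` for high-stakes decisions) without redesigning the interface.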

Model Experimentation: Iterative Learning

Once the problem and experience design are defined, teams can begin model experimentation. At this stage, the objective is not simply to train a model, but to evaluate multiple approaches and understand their trade-offs.

Experimentation typically involves:

Comparing model architectures and training approaches

Evaluating performance across diverse datasets

Stress-testing models under edge cases and unexpected inputs
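Comparing approaches across diverse datasets is easier when results are broken out per data slice rather than averaged into one headline score. The following is a minimal sketch under simple assumptions: a "model" is any callable from input text to a label, each slice is a list of labelled examples, and the two toy models and slice names (`typical`, `edge_cases`) are hypothetical stand-ins for real candidates and evaluation sets.

```python
from typing import Callable, Dict, List, Tuple

# A "model" is any callable mapping an input to a predicted label.
Model = Callable[[str], str]
# Each dataset slice is a list of (input, expected_label) pairs.
Dataset = List[Tuple[str, str]]


def accuracy(model: Model, data: Dataset) -> float:
    if not data:
        raise ValueError("empty dataset")
    correct = sum(1 for x, y in data if model(x) == y)
    return correct / len(data)


def compare(models: Dict[str, Model],
            slices: Dict[str, Dataset]) -> Dict[str, Dict[str, float]]:
    """Accuracy of every model on every slice, so weak spots stay visible."""
    return {
        name: {s: accuracy(m, data) for s, data in slices.items()}
        for name, m in models.items()
    }


def worst_slice(scores: Dict[str, float]) -> Tuple[str, float]:
    return min(scores.items(), key=lambda kv: kv[1])


# Hypothetical toy models for a sentiment task.
def keyword_model(text: str) -> str:
    return "positive" if "good" in text.lower() else "negative"


def always_positive(text: str) -> str:
    return "positive"


slices = {
    "typical": [("good product", "positive"), ("bad service", "negative")],
    # Edge cases: empty input and negation, which the keyword rule mishandles.
    "edge_cases": [("", "negative"), ("not good at all", "negative")],
}

results = compare({"keyword": keyword_model, "always_positive": always_positive}, slices)
```

Here the keyword model scores perfectly on the typical slice but only 0.5 on the edge cases, exactly the kind of gap a single aggregate benchmark number can conceal.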

Metrics during this phase should reflect real-world use cases. A model with strong benchmark scores may still fail when deployed in dynamic environments.

The experimentation phase also reveals an important insight: model accuracy alone rarely determines product success. Latency, reliability, and interpretability often carry equal weight in practical systems.
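One way to weigh accuracy against latency is to treat latency as a hard budget and pick the most accurate candidate that fits within it. This is a sketch of that single policy, not the only reasonable trade-off. The `Candidate` fields, the nearest-rank p95 definition, and the example accuracy and latency figures in the usage note are all hypothetical.

```python
import math
import time
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence


@dataclass
class Candidate:
    name: str
    accuracy: float        # measured offline, e.g. on a held-out set
    p95_latency_ms: float  # measured on representative inputs


def p95(samples: Sequence[float]) -> float:
    """Nearest-rank 95th percentile."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(95 * len(ordered) / 100)  # 1-based nearest rank
    return ordered[rank - 1]


def measure_latency_ms(predict: Callable[[str], object],
                       inputs: Sequence[str]) -> List[float]:
    """Wall-clock time of each prediction call, in milliseconds."""
    timings = []
    for x in inputs:
        start = time.perf_counter()
        predict(x)
        timings.append((time.perf_counter() - start) * 1000.0)
    return timings


def choose(candidates: Sequence[Candidate],
           latency_budget_ms: float) -> Optional[Candidate]:
    """Most accurate candidate whose p95 latency fits the budget, or None."""
    eligible = [c for c in candidates if c.p95_latency_ms <= latency_budget_ms]
    return max(eligible, key=lambda c: c.accuracy, default=None)
```

For example, with hypothetical candidates `large` (0.94 accuracy, 420 ms), `medium` (0.91, 120 ms), and `small` (0.86, 35 ms), a 200 ms budget selects `medium`, even though `large` has the best benchmark score.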

Engineering: Building Reliable Systems

The transition from experimentation to engineering is where many AI projects stall. A model that performs well in controlled experiments must now operate as part of a broader system.

Production engineering introduces additional considerations:

Data pipelines for continuous input streams

Infrastructure capable of scaling inference workloads

Monitoring systems to detect performance drift

Integration with existing software platforms

Engineering decisions influence everything from response times to operational costs. These factors ultimately determine whether an AI feature can operate reliably in real-world environments.
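Drift monitoring often starts by comparing the distribution of a live input feature against its training-time distribution. The Population Stability Index (PSI) is one widely used measure, shown here as a minimal sketch: it assumes a single numeric feature and equal-width bins over the reference data, and the function names are hypothetical. Production systems typically track many features and may use other statistics.

```python
import bisect
import math
from typing import List, Sequence


def bin_edges(reference: Sequence[float], n_bins: int = 10) -> List[float]:
    """Interior edges of equal-width bins spanning the reference data."""
    lo, hi = min(reference), max(reference)
    if lo == hi:
        raise ValueError("reference data has no spread")
    width = (hi - lo) / n_bins
    return [lo + i * width for i in range(1, n_bins)]


def proportions(values: Sequence[float], edges: Sequence[float],
                eps: float = 1e-6) -> List[float]:
    counts = [0] * (len(edges) + 1)
    for v in values:
        # Values outside the reference range land in the outermost bins.
        counts[bisect.bisect_right(edges, v)] += 1
    total = len(values)
    # eps avoids log(0) and division by zero for empty bins.
    return [max(c / total, eps) for c in counts]


def psi(reference: Sequence[float], live: Sequence[float], n_bins: int = 10) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    edges = bin_edges(reference, n_bins)
    expected = proportions(reference, edges)
    actual = proportions(live, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))
```

A PSI near zero means the live data resembles the training data. Cut-offs such as 0.1 ("watch") and 0.25 ("investigate") are common rules of thumb rather than universal standards, so alert thresholds are best set per feature.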

Lifecycle Operations: Continuous Adaptation

Unlike traditional software features, AI systems evolve after deployment. Data distributions shift, user behaviour changes, and new edge cases emerge over time.

Lifecycle operations ensure systems remain stable and effective through:

Monitoring model performance and error rates

Updating training data as new patterns appear

Retraining models to maintain accuracy

This phase also introduces governance considerations, particularly when AI systems influence financial, educational, or healthcare decisions.

Organisations that treat AI as static software often encounter gradual degradation in model quality. Continuous evaluation and iteration help maintain alignment between models and real-world conditions.
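Continuous evaluation can be as simple as tracking the error rate over recent labelled predictions and flagging when it rises meaningfully above the rate accepted at launch. The sketch below assumes ground-truth labels eventually arrive for some predictions. The class name, the `baseline_error`/`tolerance`/`window` parameters, and the idea of using the flag as a retraining trigger are illustrative assumptions, not a specific platform's API.

```python
from collections import deque


class ErrorRateMonitor:
    """Tracks the error rate over the most recent `window` labelled predictions
    and flags when it rises above the accepted baseline plus a tolerance."""

    def __init__(self, baseline_error: float, tolerance: float = 0.05,
                 window: int = 500):
        if not 0.0 <= baseline_error <= 1.0:
            raise ValueError("baseline_error must be in [0, 1]")
        self.baseline_error = baseline_error
        self.tolerance = tolerance
        self.window = window
        # Oldest outcomes fall off automatically once the window is full.
        self._outcomes = deque(maxlen=window)  # True means the model was wrong

    def record(self, predicted, actual) -> None:
        self._outcomes.append(predicted != actual)

    @property
    def error_rate(self) -> float:
        if not self._outcomes:
            return 0.0
        return sum(self._outcomes) / len(self._outcomes)

    def needs_retraining(self) -> bool:
        # Only decide once the window is full, to avoid reacting to noise.
        if len(self._outcomes) < self.window:
            return False
        return self.error_rate > self.baseline_error + self.tolerance
```

A flag from `needs_retraining()` would typically open a review or retraining job rather than retrain automatically, which also leaves room for the governance checks mentioned above.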

Integrating the Full Cycle

AI product development requires collaboration across multiple disciplines. Product strategy, design, machine learning, and engineering all influence outcomes at different stages of the lifecycle.

When these stages operate in isolation, progress slows and knowledge fragments across teams. A more integrated approach allows insights from experimentation to inform product design, while operational data shapes future iterations.

The lifecycle perspective encourages teams to treat AI systems as evolving products rather than isolated technical artefacts. With this mindset, organisations can move beyond experimentation and build systems that deliver consistent value over time.

Geetanjali Shrivastava

@geetanjalishrivastava