Trust, Risk, and Responsible AI in FinTech

Geetanjali Shrivastava

Mar 5, 2026 · 3 min read

Financial technology has undergone significant transformation over the past decade. Digital banking platforms, algorithmic credit scoring systems, and automated investment tools now play central roles in financial services.

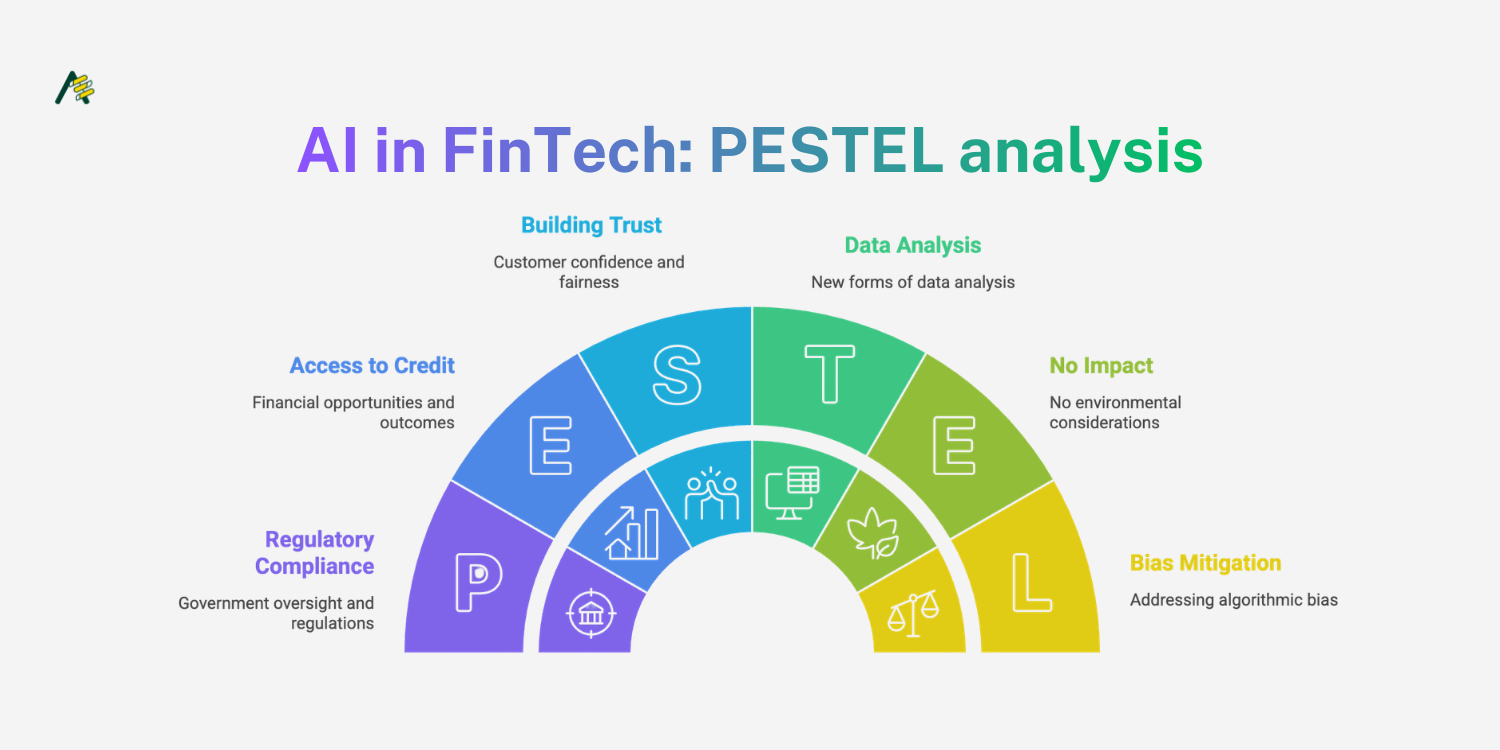

Artificial intelligence is accelerating this transformation by enabling new forms of data analysis and decision-making. However, financial systems operate within environments where trust and risk management are essential.

As AI adoption expands across the financial sector, responsible deployment becomes a critical priority.

The Role of AI in Financial Decision-Making

AI systems are increasingly used to evaluate credit risk, detect fraud, and provide financial recommendations. These models can process large volumes of data more quickly than traditional analytical methods.

For example, machine learning algorithms may analyze transaction patterns to identify unusual activity or evaluate creditworthiness based on alternative data sources.
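To make the idea of spotting unusual activity concrete, here is a minimal sketch that flags transaction amounts far from an account's typical spend using a modified z-score. The function name, the sample amounts, and the 3.5 cutoff (a commonly used default for this statistic) are illustrative assumptions. Production fraud models draw on many more signals than amount alone.

```python
from statistics import median

def flag_unusual_amounts(amounts, threshold=3.5):
    """Return indices of amounts whose modified z-score exceeds the threshold."""
    med = median(amounts)
    # Median absolute deviation: a spread estimate that a few outliers barely move.
    mad = median([abs(a - med) for a in amounts])
    if mad == 0:
        return []  # no spread to compare against, so nothing is flagged
    # 0.6745 rescales the MAD so scores are comparable to standard z-scores.
    return [i for i, a in enumerate(amounts)
            if 0.6745 * abs(a - med) / mad > threshold]

# Hypothetical transaction history for one account
history = [42.0, 18.5, 63.2, 25.0, 51.9, 30.4, 2400.0, 47.3]
print(flag_unusual_amounts(history))  # indices of flagged transactions
```

The median-based score is used here instead of a mean and standard deviation because a single very large transaction would otherwise inflate the spread and hide itself.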

While these capabilities offer efficiency advantages, they also introduce new forms of risk. Financial decisions made by automated systems can affect access to credit, investment outcomes, and economic opportunities.

Ensuring that these systems operate fairly and transparently is therefore essential.

Algorithmic Bias in Financial Systems

One of the primary concerns associated with AI in FinTech is the potential for algorithmic bias.

Machine learning models learn patterns from historical data. If historical datasets contain biases or structural inequalities, models may reproduce those patterns in their predictions.

In credit scoring systems, for example, models trained on incomplete or biased datasets may unintentionally disadvantage certain demographic groups.

Addressing this issue requires careful dataset design and ongoing evaluation of model outputs. Financial institutions must regularly audit AI systems to identify patterns that could indicate unintended bias.
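One simple check an audit might include is comparing approval rates across groups. The sketch below computes the ratio of the lowest group approval rate to the highest. The group labels, sample data, and the 0.8 review threshold (borrowed from the "four-fifths" rule of thumb used in US employment guidance) are assumptions for illustration, not a regulatory standard for credit models.

```python
from collections import defaultdict

def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group approval rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical audit sample: 100 decisions for each of two groups
sample = ([("A", True)] * 60 + [("A", False)] * 40
          + [("B", True)] * 42 + [("B", False)] * 58)
rates = approval_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:  # assumed review threshold
    print("Approval-rate ratio below 0.8: flag for review")
```

A low ratio does not prove a model is biased, since groups can differ in legitimate risk factors, but it is a useful signal for where a deeper review should start.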

Transparency and Explainability

Financial decisions often require clear explanations. Customers, regulators, and financial institutions themselves need to understand how conclusions were reached.

Complex machine learning models can make this process difficult, particularly when models operate as black boxes.

To address this challenge, many organizations are investing in explainable AI techniques. These methods aim to provide insight into the factors influencing model predictions.

Explainability tools can help analysts identify which variables contributed to a credit assessment or risk classification. This transparency supports both internal oversight and regulatory compliance.
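For a linear scoring model, this kind of attribution can be computed directly: each feature's contribution is its weight multiplied by how far the applicant sits from a reference applicant. The sketch below assumes a hypothetical linear credit score; the feature names, weights, and baseline values are invented for illustration. Non-linear models need dedicated attribution methods instead.

```python
def feature_contributions(weights, baseline, applicant):
    """Per-feature contribution to a linear score, relative to a baseline applicant."""
    return {name: weights[name] * (applicant[name] - baseline[name])
            for name in weights}

# Hypothetical model weights, reference applicant, and applicant under review
weights = {"debt_to_income": -2.0, "years_of_history": 0.5, "missed_payments": -1.5}
baseline = {"debt_to_income": 0.25, "years_of_history": 8, "missed_payments": 0}
applicant = {"debt_to_income": 0.5, "years_of_history": 4, "missed_payments": 2}

contribs = feature_contributions(weights, baseline, applicant)
# List the features that moved the score most, largest effect first.
for name, value in sorted(contribs.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name}: {value:+.2f}")
```

Sorting by absolute contribution gives an analyst a ranked list of reasons behind an assessment, which is the kind of output explanation-focused reviews tend to ask for.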

Regulatory Expectations

Financial services operate within highly regulated environments. As AI systems become more prevalent, regulators are paying closer attention to how these technologies influence decision-making.

Regulatory frameworks increasingly emphasize:

- Documentation of model development processes
- Evidence of bias mitigation strategies
- Ongoing monitoring of model performance

Financial institutions deploying AI systems must therefore maintain clear records of how models were trained, evaluated, and updated over time.
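As a rough illustration of what such a record could look like in code, the sketch below keeps an append-only event log per model version. The class names, fields, event kinds, and example entries are all hypothetical. Real model inventories are usually backed by a database or a dedicated model registry.

```python
from dataclasses import dataclass, field
from datetime import date

@dataclass(frozen=True)
class ModelEvent:
    on: date
    kind: str      # "trained", "evaluated", or "updated"
    details: str

@dataclass
class ModelRecord:
    name: str
    version: str
    training_data: str
    events: list = field(default_factory=list)

    def log(self, on, kind, details):
        """Append an event; reject kinds outside the agreed vocabulary."""
        if kind not in {"trained", "evaluated", "updated"}:
            raise ValueError(f"unknown event kind: {kind}")
        self.events.append(ModelEvent(on, kind, details))

    def history(self, kind=None):
        """All events, or only those of one kind, in the order they were logged."""
        return [e for e in self.events if kind is None or e.kind == kind]

# Hypothetical entries for one model
record = ModelRecord("credit_risk", "1.2.0", "loans_2019_2023 snapshot")
record.log(date(2025, 1, 10), "trained", "initial training run")
record.log(date(2025, 1, 17), "evaluated", "holdout metrics and group approval rates reviewed")
record.log(date(2025, 6, 2), "updated", "retrained after drift alert")
print([f"{e.on.isoformat()} {e.kind}" for e in record.history()])
```

Making each event immutable and restricting the event kinds keeps the log easy to query when a regulator asks what changed and when.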

These practices help ensure accountability and allow organizations to respond effectively to regulatory inquiries.

Managing Operational Risk

AI systems also introduce operational considerations beyond fairness and transparency.

Financial institutions must ensure that AI-driven systems remain reliable under varying conditions. This includes monitoring model performance, detecting data drift, and maintaining infrastructure capable of supporting real-time decision-making.
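One widely used drift check is the Population Stability Index (PSI), which compares how a feature or score is distributed in live data against the training data. The sketch below is a minimal version under stated assumptions: the bin edges, sample scores, and the 0.1 and 0.25 alert levels (common rules of thumb, not formal standards) are illustrative.

```python
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples over shared, ascending bin edges."""
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # Bin index = number of edges at or below v.
            counts[sum(v >= edge for edge in edges)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    exp_shares, act_shares = shares(expected), shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(exp_shares, act_shares))

# Hypothetical credit scores at training time and in production
training = [300] * 20 + [500] * 50 + [700] * 30
live = [300] * 35 + [500] * 45 + [700] * 20
value = psi(training, live, edges=[400, 600])

if value >= 0.25:
    status = "major shift"
elif value >= 0.1:
    status = "moderate shift"
else:
    status = "stable"
print(round(value, 3), status)
```

Running a check like this on a schedule, per feature and on the model's output score, gives an early warning before drift shows up as degraded accuracy.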

Risk management teams play an important role in evaluating how AI models interact with existing financial processes.

Stress testing and scenario analysis can help institutions understand how models behave under unusual circumstances, such as market volatility or sudden shifts in transaction patterns.
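A simple form of scenario analysis is to replay historical inputs under a shock and watch how the system's output changes. In the sketch below, a fixed-limit review rule stands in for a real model; the rule, the limit, the transaction amounts, and the shock factors are all hypothetical.

```python
def alert_rate(amounts, limit):
    """Share of transactions that a hypothetical review rule would flag."""
    return sum(a > limit for a in amounts) / len(amounts)

def stress_test(amounts, limit, shocks):
    """Re-run the rule with every amount scaled by each shock factor."""
    return {s: alert_rate([a * s for a in amounts], limit) for s in shocks}

# Hypothetical baseline transaction amounts
baseline = [120, 80, 450, 300, 950, 60, 700, 240, 510, 1100]
results = stress_test(baseline, limit=1000, shocks=[1.0, 1.5, 2.0])
for shock, rate in results.items():
    print(f"amounts x{shock}: {rate:.0%} flagged")
```

If the alert rate climbs sharply under a plausible shock, the institution learns ahead of time that review queues or thresholds would need attention during a real surge.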

Building Trust in AI-Enabled Financial Systems

Trust is fundamental to financial services. Customers must feel confident that systems operate consistently and fairly.

Responsible AI practices contribute to this trust by combining technical rigor with governance frameworks.

Organizations adopting AI in FinTech increasingly rely on multidisciplinary teams that include engineers, risk analysts, compliance specialists, and financial experts.

This collaborative approach helps ensure that AI systems are both technically sound and financially responsible.

As AI continues to influence financial infrastructure, maintaining this balance between innovation and accountability will remain central to the evolution of FinTech.
